A NEW MODEL FOR SBCC CAPACITY STRENGTHENING

The Health Communication Capacity Collaborative (HC3) SBCC Capacity Ecosystem™ is a model that reflects the systematic assessment, design and implementation of customized and strategic capacity strengthening for social and behavior change communication (SBCC). While arising from the work of HC3, it is a model that can be used by any project seeking to strengthen SBCC capacity at the local, regional or global level.

WHY AN “ECOSYSTEM”?

HC3 wants to disrupt the notion of linear or hierarchical change often associated with capacity strengthening initiatives. We know from experience that capacity strengthening is a dynamic process that involves many interacting agents. An “ecosystem” speaks to the inherently complex, interconnected and often unpredictable nature of capacity strengthening and to the dynamic human environments in which we work. It recognizes that one intervention is almost never enough to make change.

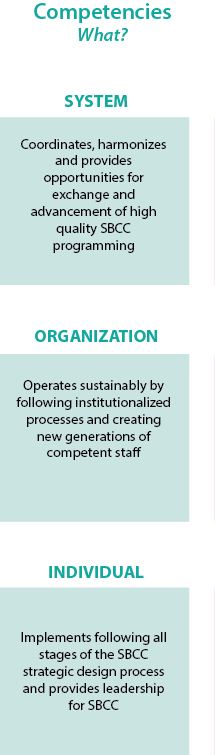

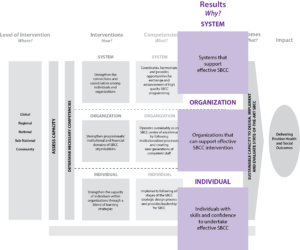

SYSTEMS, ORGANIZATIONS, INDIVIDUALS

At the heart of the ecosystem is the focus on the capacity strengthening of individuals, organizations and systems, which is also consistent with the socio-ecological model that guides SBCC program implementation. It is important to understand the interconnectedness of these three audiences: individuals function in organizations and organizations operate in systems. Systems are the “connective tissue” that link and support the organizations and the individuals.

ITERATIVE AND SYSTEMATIC

SBCC capacity strengthening is a thoughtfully planned and iterative process. Just as SBCC implementation follows a strategic design process, capacity strengthening follows a similar process of inquiry, development, implementation, evaluation and re-planning. Capacity strengthening is not a “one-off” training, but a planned program of activities based on in-depth knowledge of the beneficiaries and their needs and goals.

FOCUS ON RELATIONSHIPS

Capacity strengthening is not just a technical process but also a social process. Building trusting, collaborative relationships is critical in an SBCC capacity strengthening program. Country-based partners are therefore best situated to lead capacity strengthening initiatives, given their deep understanding of the cultural, political and social context and of the networks of relationships in which SBCC practitioners and organizations are embedded. Moreover, the ideal scenario is one in which the recipient is not only fully engaged as an equal partner in their own capacity strengthening but also drives the capacity strengthening agenda.

Exploring the Ecosystem

THE ECOSYSTEM’S MAJOR ELEMENTS: A BRIEF OVERVIEW

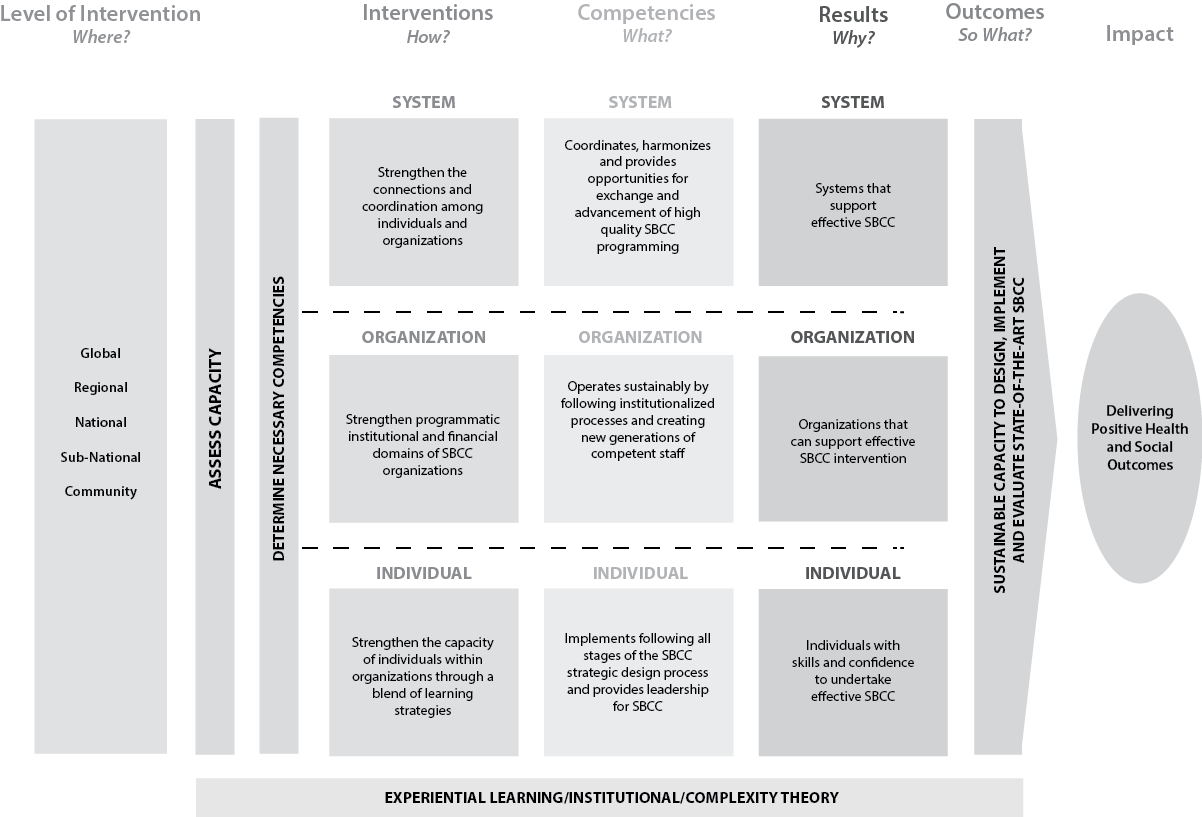

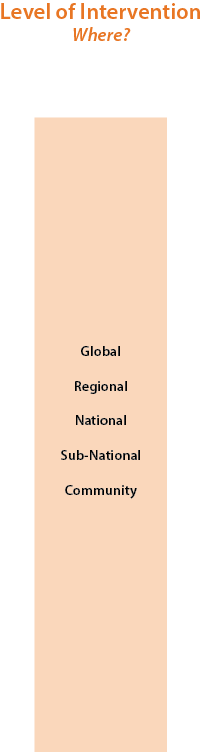

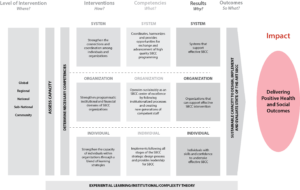

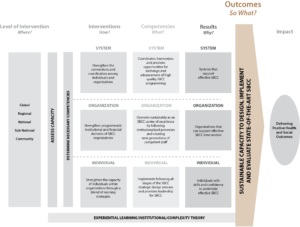

The ecosystem rests on a foundation that draws from three distinct but interrelated THEORIES. Moving from left to right across the model, the design of a capacity strengthening program begins by identifying the LEVEL OF INTERVENTION (WHERE) at which capacity strengthening will focus. A capacity ASSESSMENT is then conducted at the levels identified.

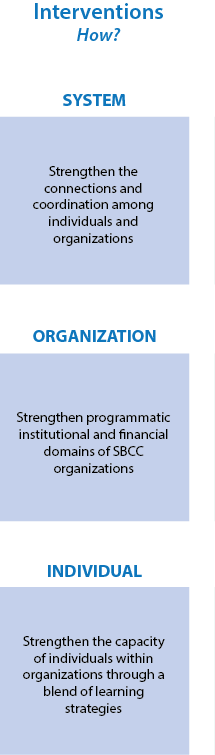

That assessment will determine the competencies that the capacity strengthening should build. With that information, INTERVENTIONS (HOW) are planned at the systems, organizational and/or individual levels. Those interventions are designed to affect the identified COMPETENCIES (WHAT) to achieve desired RESULTS (WHY). The collective effect of those achievements will lead to capacity building OUTCOMES which ultimately contribute to overall public health IMPACT.

WHERE ARE THE ARROWS IN THIS MODEL?

The ecosystem is deliberately non-directional – hence the absence of arrows. Experience shows that capacity strengthening does not always move in a forward linear progression. This can be true in advantageous or disadvantageous ways. For example, significant time may be spent building the technical skills of an NGO only to then witness high staff turnover. Major political upheaval in a country may disrupt systems-level capacity, sometimes for years at a time, taking existing capacity backwards.

Conversely, a dynamic and influential national or sub-national leader may emerge midway through a capacity program and expand its reach, causing it to be reevaluated for the better and to set sights higher than initially anticipated. Or another donor may fund a complementary capacity strengthening program that could synergize with an existing program, resulting in a dramatic increase in capacity.

A non-linear ecosystem approach is a reminder to remain flexible so as to respond thoughtfully and strategically to the emergence of these threats/opportunities.

STEP BY STEP

In a more detailed exploration of the model, it is useful to start at the far right. Knowing where a capacity strengthening program should go, it is easier to understand how to get there.

PROJECT-BASED LEARNING

In many instances, an SBCC project is asked to design, implement and evaluate SBCC projects in collaboration with local country-based partners. This collaborative implementation process offers tremendous opportunities to nurture SBCC knowledge and skills among local counterparts. However, merely engaging in collaborative implementation does not automatically increase capacity.

For example, simply inviting MOH staff to an SBCC strategy design workshop does not ensure staff will gain SBCC design skills. The collaborative implementation process must include a systematic plan for how capacity will be reinforced throughout the process. To encourage capacity building through the collaborative implementation process, HC3 developed a Project-Based Learning model, which includes experience, review and application.

Project-Based Learning takes advantage of collaborative implementation opportunities to reinforce learning. This experiential, interaction-based learning gives professionals opportunities to immerse themselves in gaining knowledge and applying it directly to a relevant situation in the workplace. The approach can be used even where capacity strengthening is not an explicit objective of the program, because activities can be implemented at low or even no cost, such as facilitating discussion learning groups on Springboard.

Within a Project-Based Learning approach, professionals gain SBCC knowledge and skills as they are given time and space to practice an activity, reflect on it, and apply their learning alongside SBCC experts who can guide the process. The Project-Based Learning model follows three core steps:

- 1. Planning and Doing

- Perform. Do the activity. Plan for discovery. Create an experience.

- Utilize:

• Job aids

• Formal training/ Short course

• Workshops

• Guided discovery

• Structured discussion

• Professional networks

• Books/ Articles

• Video/ Podcasts

• Role-plays/ Drama activities

• Personal stories/Case studies

• Visualizations and imaginative activities

• Team games/ Problem-solving

- For example, a project might work with the MOH to conduct a situation analysis or design a national SBCC strategy. Before performing these activities, provide materials or trainings to help the MOH staff prepare and plan for the activity.

- 2. Reviewing

- Share results, reactions, observations. Process by discussing. Look at experience, analyze, reflect.

- Utilize:

• After action reviews

• Briefing sessions

• Discussions/ Reflection in cooperative groups

• Small face-to-face group work

• Email/ Online discussion groups

• Professional networks

• Storytelling, sharing with others

• Reflective personal essays

• Thought questions

• Personal journals, diaries

• Portfolios

• (Participant) Presentations

- For example, a project might organize cooperative discussion groups or Springboard forums to discuss the implementation process and what was learned.

- 3. Applying

- Generalize. Connect experience to the real world. Apply learning to a similar or different situation.

- Utilize:

• Application sessions

• Models, analogies and theory construction

• Coaching/mentoring sessions

• Portfolios

• New SBCC campaign design and implementation

- For example, a project might provide mentorship and coaching as the MOH applies their learning to a new campaign. Or, HC3 might facilitate an after-action review to discuss how to apply new skills.

This three-step model can be applied at each of the five stages of the program implementation process to encourage learning through practical experience.

An SBCC project can support and facilitate the Project-Based Learning process by providing opportunities to apply the model, developing materials that support learning, offering feedback and encouraging supervisors to create situations to apply newfound learning.

WHAT IS THE TIME HORIZON FOR CAPACITY STRENGTHENING ACTIVITIES?

The time needed for capacity strengthening activities to reach their intended goal(s) will depend on multiple variables. There is no easy formula. Variables include those conditions that are inherent in any capacity strengthening program: the base level of capacity of the intended recipient(s) of the capacity strengthening (whether an individual, organization or system); the level of capacity desired; the amount of resources available for capacity strengthening and thus the intensity of effort of the capacity strengthening; and the level of buy-in for the capacity strengthening, both on the part of the capacity strengthening recipient and also in the recipient’s environment.

In addition, external factors that cannot be predicted at the outset may influence the time horizon. These include staff turnover, shifts in leadership, significant changes in the workload of the capacity strengthening recipients, political disruption, and crises that demand that capacity strengthening and human resources be diverted for some period of time (such as the earthquake in Nepal or avian influenza in Egypt). Given all these variables, a capacity strengthening program can be as short as a few hours, or as long as several years.

Given that capacity strengthening is both science and art, it is critical that all stakeholders, including capacity strengthening recipients, capacity strengthening providers, donors, government and others, communicate openly and share clear expectations of the goals of the capacity strengthening and what is realistically needed to get there. When obstacles or opportunities arise that may shift the time horizon, all stakeholders should be made aware so that they can manage expectations and agree on a revised timeline or reassess goals if necessary.

DEFINING AND MEASURING CAPACITY STRENGTHENING SUCCESS

Given the varied and complex nature of programs, success will look different in each case. The definition of success can highlight both the process and the outcomes of the capacity strengthening efforts. At the most basic level, measuring success around process can center on the achievement of specific activities and outputs, at any of the levels. An even greater measure of success is achieved when a capacity strengthening program can demonstrate the link between its capacity strengthening efforts and certain outcomes, whether intended or unintended.

The challenge lies in measuring outcomes tied to the level at which the specific activity is intervening. For example, a training activity that aims to strengthen SBCC competencies at the individual level can measure success with pre- and post-tests conducted at different points in time. When looking to assess organizational or system-level change, however, readily available tools and traditional monitoring and evaluation approaches are not able to accurately measure success. Depending on the type and scope of the activity, measuring outcomes in these instances calls for approaches such as program/organizational documentation review, expert assessment of work outputs and interviews with key stakeholders about observed changes.

Defining outcomes and determining the most appropriate ways to measure success should be a negotiated process among stakeholders, including the recipient(s) of the capacity strengthening, the capacity strengthening provider and the donor. It is critical that a common understanding of success be established at the beginning of the project. For example, if a two-year program is developing the capacity of a fledgling SBCC organization with little experience, then the level of capacity achieved will be well below what might define success for an organization starting at a much higher level of capacity.

The capacity strengthening ecosystem framework illustrates the complex, non-linear nature of SBCC capacity strengthening. There are multiple stakeholders at various levels of intervention. In addition, the context of the specific geographic location where capacity strengthening activities take place creates a unique environment with distinct challenges and realities. As a result, as mentioned above, the task of measuring outcomes resulting from SBCC capacity strengthening requires different approaches than traditional monitoring and evaluation.

One novel approach for monitoring and evaluation of SBCC capacity strengthening is Outcome Harvesting (OH), a participatory method of assessing programmatic success by identifying both intended and unintended results of programs. OH is well suited to capturing project results in complex situations where the cause and effect of an intervention are unknown, or where agreement among many stakeholders must be reached in order to finalize and continually adapt an intervention’s strategy. OH is ideal for considering multiple perspectives to decide who and what has changed since the start of an intervention, when and where change has occurred, and how the change came about.

Learn More

Socio-Ecological Model

Experiential Learning Theory

http://www.learning-theories.com/experiential-learning-kolb.html for a brief summary

Kolb, D. (1999). Experiential Learning Theory: Previous Research and New Directions.

Institutional Economic Theory

Complexity Theory (as applied to capacity strengthening)

Woodhill, J. 2010. Capacities for Institutional Innovation: A Complexity Perspective.

Outcome Harvesting
