Unpacking the Black Box: What Does Applied Behavioral Economics Actually Look Like?

Behavioral economics (BE) is a hot topic these days. You may have heard that the World Bank recently launched a team dedicated to applying behavioral insights in its work. This unit follows the lead of similar units in the UK, the US, and many other national and local governments around the globe. Some of the largest funders of international development initiatives are calling for approaches informed by behavioral economics. Even the mainstream has taken an interest, as evidenced by best-selling books like Nudge, Scarcity and Thinking, Fast and Slow.

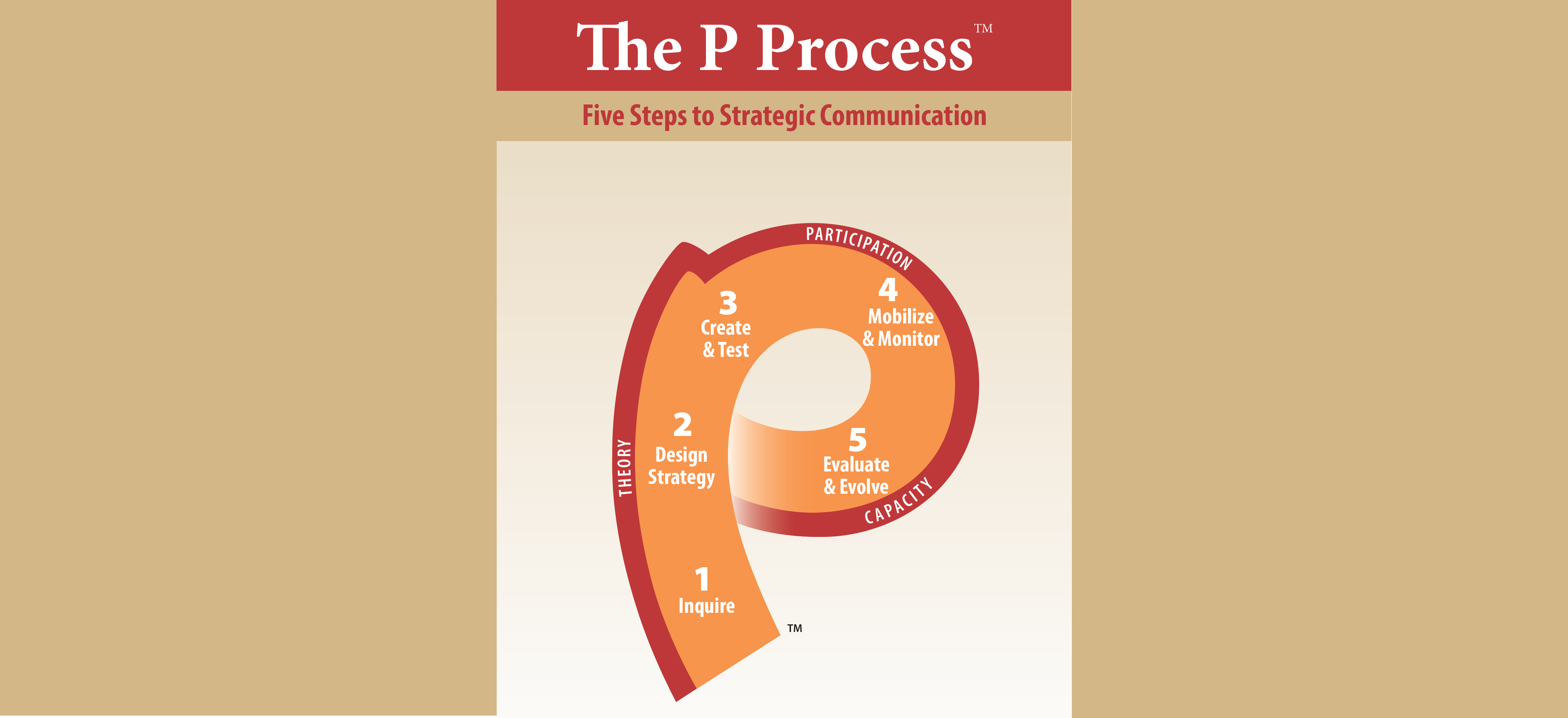

There is a growing demand for BE approaches to social challenges, but what does this actually mean in practice? In a previous blog post we explored how a BE-informed approach can complement traditional behavior-change approaches like social and behavior change communication (SBCC). In this post we’ll explore the nuts and bolts of applying BE, using one of our projects as an example.

Over the last two years ideas42 has been applying BE to tackle behavioral challenges in international family planning and reproductive health (FP/RH). We’ve explored challenges ranging from family planning uptake during integrated immunization and family planning services to discontinuation of modern method contraceptives. While the contexts and drivers of these challenges are diverse, our approach has been consistent. Our process starts with understanding the problem and its drivers, before we jump into designing solutions. This means first questioning assumptions, coming up with hypotheses and collecting evidence. We then design behaviorally informed solutions to address the challenge and improve outcomes. Finally, wherever possible, we rigorously assess the impact of the implemented solutions.

In an ongoing project in Nepal, ideas42, in collaboration with Sunaulo Parivar Nepal (SPN), a private sector implementing partner of Marie Stopes International, applied a behavioral economics approach to increase post-abortion family planning (PAFP) uptake within SPN’s 36 sexual and reproductive health (SRH) centers.

We first sought to define the problem at hand and narrow the focus of our subsequent diagnosis and design work. Research from Nepal (Rocca et al., 2014) suggests that Nepali women receiving abortions want to limit or space births using contraception, but most do not follow through on this intention. To understand this gap, we directly observed the abortion client process and counseling, interviewed clients, family planning service providers and counselors, and analyzed SPN service data to confirm when, where, how and to what extent PAFP uptake was occurring. While defining the problem, it is critical that we remain completely agnostic as to what is driving PAFP uptake and how it could be improved; we don’t want our own assumptions and biases to cloud our understanding of the problem or limit our exploration of potential solutions. For example, if we assume that low uptake is caused by people not knowing enough about contraceptive methods, then we will see the problem as a lack of information and may miss other critical challenges.

Next, we dug deeper to diagnose what was driving current PAFP rates. Drawing on our understanding of SPN’s context and processes, as well as our behavioral economics expertise on what influences human decision-making and behavior, we generated several hypotheses for the drivers of PAFP uptake in this context. We then conducted additional qualitative exploration, specifically designed to confirm, reject or refine our hypotheses. Take, for example, the hypothesis that providers were not motivated to provide high-quality services. Our fieldwork revealed that the SRH providers at SPN were well-trained and informed on counseling clients on contraceptive options, and they were intrinsically motivated to offer high-quality services. Yet we uncovered a critical insight: existing performance feedback processes made it difficult for providers to assess their own performance and place it in the context of other providers’ performance. Without this information, providers did not know how well they were delivering services or whether there was room for improvement. The core behavioral concept at play here is the process of “social comparison.” As humans, when we seek to assess our performance or behavior, we often look to social cues or markers to determine whether our performance is acceptable or needs improvement. We do this by directly observing others’ actions or reactions to determine what behavior is socially appropriate or acceptable, or by seeking out objective information that allows us to assess our own opinions and abilities. At SPN, because providers had no quantitative information on PAFP uptake at other centers, they were unable to make a social comparison and objectively assess their own performance.

We used the insights uncovered in our diagnosis to design a low-cost behavioral solution to improve the consistency with which providers counseled clients on family planning methods. Together with SPN we identified three intervention designs intended to provide a social comparison of PAFP uptake. We user-tested these interventions with service providers, team leaders and counselors from SPN centers. Providers contributed critical feedback to the intervention designs and played a key role in selecting which of the three interventions would be implemented and tested. We ultimately decided to give providers numerical information on their center’s PAFP uptake compared to PAFP uptake at similar centers. This information would be communicated through posters that would be discussed during monthly team meetings. The critical components of this design were that: 1) quantitative indicators (monthly PAFP uptake) of center performance were provided, 2) comparisons were made among comparable facilities (i.e. similar in volume of patient flow, number of center staff, and PAFP uptake), 3) a text box was included that cued positive or negative reinforcement, depending on where the center’s performance fell within its comparison group, and 4) the information was presented during monthly team meetings, a setting that enabled providers to collaboratively discuss performance and share strategies for improvement.
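To make the mechanics of such a poster concrete, here is a minimal sketch in Python of how a center's monthly uptake could be placed within its comparison group and paired with a reinforcement message. It is an illustration only, not the tool used in the project: the function names, the use of the peer median as the benchmark, the message wording, and the example figures are all hypothetical assumptions.

```python
from statistics import median


def pafp_uptake(pafp_clients, abortion_clients):
    """Share of abortion clients who left with a family planning method."""
    if abortion_clients <= 0:
        raise ValueError("abortion_clients must be positive")
    return pafp_clients / abortion_clients


def comparison_feedback(center, group_rates):
    """Compare one center's uptake with the other centers in its group.

    group_rates maps center name -> monthly uptake rate and must include
    `center` itself. The peer median benchmark is an assumption made for
    this sketch; the source does not say which benchmark was used.
    """
    own = group_rates[center]
    peers = [rate for name, rate in group_rates.items() if name != center]
    benchmark = median(peers)
    # Rank 1 means the highest uptake in the comparison group.
    rank = 1 + sum(rate > own for rate in peers)
    if own >= benchmark:
        message = "Great work! Your center is at or above similar centers."
    else:
        message = "Similar centers are doing better. What can we try next month?"
    return {"uptake": own, "peer_median": benchmark, "rank": rank, "message": message}


# Hypothetical monthly counts for three comparable centers.
group = {
    "Center A": pafp_uptake(60, 100),
    "Center B": pafp_uptake(45, 100),
    "Center C": pafp_uptake(50, 100),
}
for name in group:
    print(name, comparison_feedback(name, group))
```

The point of the sketch is that the comparison only uses centers in the same group, which mirrors the design requirement that facilities be compared with similar facilities rather than with the whole network.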

We are currently testing the effectiveness of this social comparison intervention through a center-level, stepped-wedge randomized controlled trial. You can learn more about the behavioral economics approach taken in this work in a recent publication in Frontiers in Public Health.
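For readers unfamiliar with the term, in a stepped-wedge design every center eventually receives the intervention, but centers are randomly assigned to groups that start at different times, so centers that have not yet started serve as controls for those that have. The sketch below shows the general idea in Python. It is not the trial's actual protocol: the number of steps, the seed, and the center labels are assumptions chosen for illustration; only the count of 36 centers comes from the project description.

```python
import random


def stepped_wedge_schedule(centers, n_steps, seed=0):
    """Randomly assign each center to a step (1..n_steps).

    A center assigned to step k starts the intervention in period k.
    Period 0 is a baseline in which no center has the intervention.
    """
    rng = random.Random(seed)  # fixed seed so the allocation is reproducible
    shuffled = list(centers)
    rng.shuffle(shuffled)
    # Deal the shuffled centers round-robin so steps are as equal as possible.
    return {center: i % n_steps + 1 for i, center in enumerate(shuffled)}


def is_treated(start_step, period):
    """True once a center's assigned step has been reached."""
    return period >= start_step


# 36 centers as in the project; 4 steps is a hypothetical choice.
centers = [f"center_{i:02d}" for i in range(1, 37)]
schedule = stepped_wedge_schedule(centers, n_steps=4)

for period in range(0, 5):
    treated = sum(is_treated(step, period) for step in schedule.values())
    print(f"period {period}: {treated} of {len(centers)} centers receive posters")
```

Because the order in which centers start is random, differences in uptake between started and not-yet-started centers in the same period can be attributed to the intervention rather than to which centers were chosen first.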

This collaboration in Nepal is just one example of how behavioral economics can be applied in FP/RH. Behavioral economics is a powerful lens to approach persistent problems anew and uncover powerfully simple solutions. To learn more about our other FP/RH projects and explore applied behavioral economics in other domains, check out our website at www.ideas42.org.
